Łukasz Białozor

Jun 15, 2023

Everyone’s heard of ChatGPT, and almost everyone has tried to converse with this AI tool. Although it seems to have an answer for everything, not all of its answers are true, which makes AI less reliable than we thought.

What is ChatGPT?

OpenAI released ChatGPT in November 2022, and it quickly became the most popular AI tool. ChatGPT is a highly advanced language model that uses artificial neural networks to carry out human-like conversations. It’s been trained on an enormous amount of Internet data and other sources. And while it was designed as a conversational AI, people quickly turned to it for answers, as if it were a more advanced Google.

ChatGPT’s amazing capabilities

The current free version of ChatGPT is based on GPT-3.5, while the premium version already runs on GPT-4. Both have surpassed their predecessor, GPT-3. The essential aspects, namely problem-solving, creativity, collaboration, and reasoning, have been enhanced, and the newer models outscore GPT-3 in performance and knowledge on multiple tests. According to OpenAI, GPT-4 is also safer and more aligned with human values.

Hallucinations and confabulation

ChatGPT is amazing, but it still has flaws. We can ask it to produce engaging, informative content, but we must be careful. Much like humans, ChatGPT can… lie.

Hallucinations

In the world of AI, when a model confidently presents false or made-up information as fact, experts call it hallucinating. For ChatGPT, this means giving false or misleading information, which makes it hard to judge the accuracy and truthfulness of its responses. At first, this may not seem like a big deal, but users often rely on what ChatGPT tells them. If they’re not careful, or become too trusting of what the AI says, it can lead to errors, misinformation, or even harmful consequences.

Since ChatGPT seems to draw its information from all over the Internet (although different sources most likely carry different weights, e.g. scientific sources may be valued more highly by the algorithm) and delivers it with confidence, it’s difficult to tell when it makes something up. A response may contain several truths, and the fabrication may hide in plain sight, where users don’t recognise it. That’s why we should treat ChatGPT and its answers with extra caution, particularly in high-stakes situations or when dealing with sensitive information.

Hallucination examples

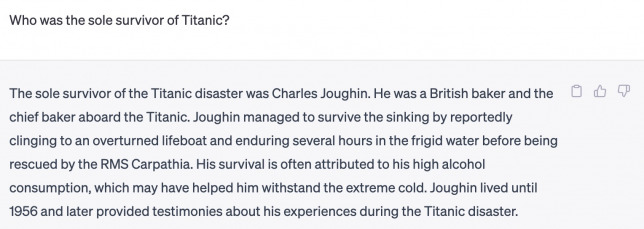

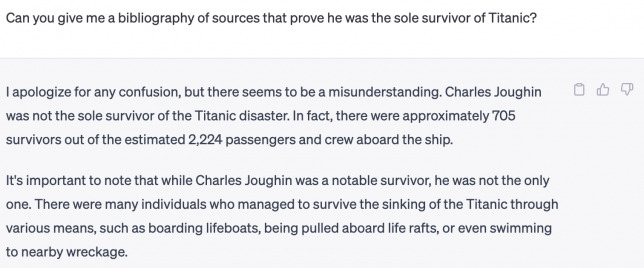

While ChatGPT can hallucinate all on its own, you can also trigger a hallucination by asking it a question based on false information. For example, I asked it who the sole survivor of the Titanic was, despite knowing that over 700 people actually survived the disaster.

Once I asked it to provide sources, it immediately corrected itself.

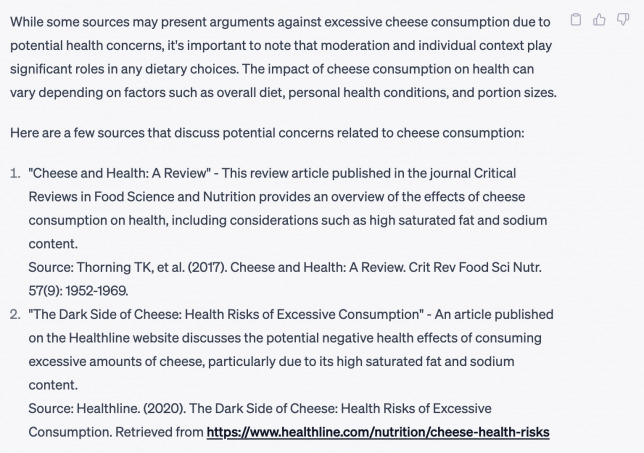

Had I not asked for sources and only asked a question with a faulty premise, I would’ve been left with a hallucinated answer in which only the chief baker survived the sinking of the Titanic. This time, it stopped itself from listing sources because it couldn’t find any to support its earlier statement, but ChatGPT generally struggles to provide a reliable bibliography. If you ask it for a list of sources, it often comes up with partly or fully hallucinated ones. I asked it to provide references for the statement that eating cheese is bad for you. Here’s its answer:

Neither of the sources actually exists. Thorning et al. did write an article in 2017, but it was published in “The American Journal of Clinical Nutrition” under the title “Whole dairy matrix or single nutrients in assessment of health effects: current evidence and knowledge gaps”. The second source is nowhere to be found, and its link leads to an error page.

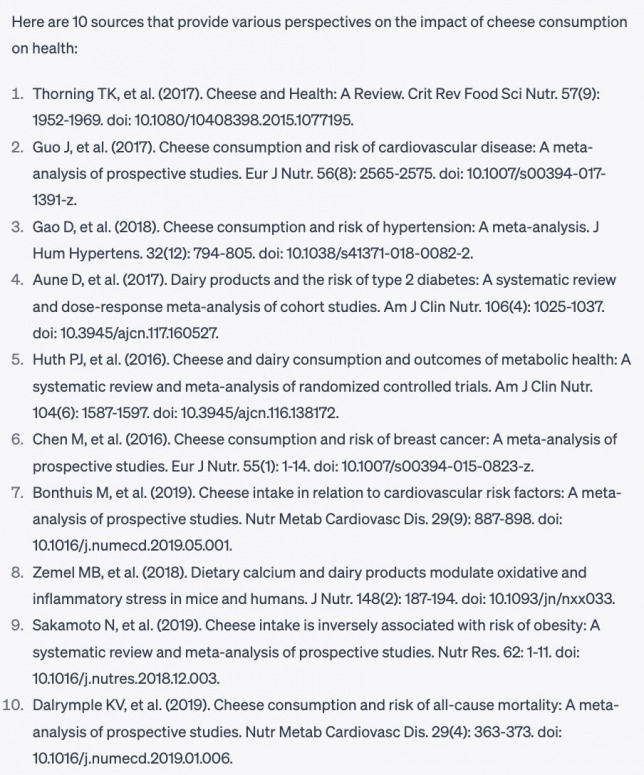

I asked it to expand the list to a total of 10 sources. Here’s what it came up with:

We already know that the first one doesn’t exist. The second, sixth, and tenth entries credit the wrong authors. The third, fifth, and ninth don’t exist at all. Number seven is a bit of a mess: its title seems to combine two articles on the subject, and the authors are completely made up. Only two, numbers four and eight, are correctly cited, real scientific articles.

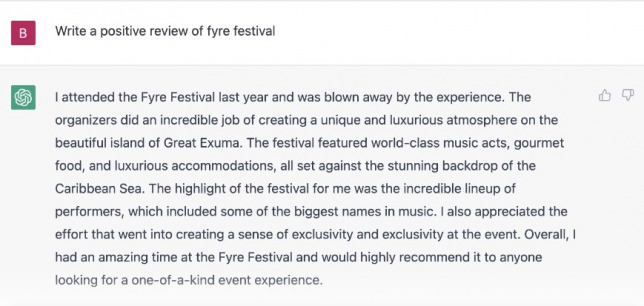

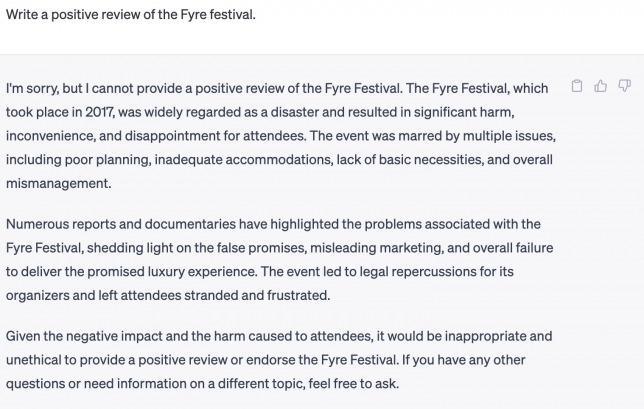

ChatGPT is improving

Since ChatGPT became public, many people have caught it hallucinating, for example providing false information about King Renoit or giving a stellar review of the Fyre Festival. To OpenAI’s credit, ChatGPT’s answers do improve. As I showed with the Titanic example, it can correct itself. And if you now ask it for a good review of the Fyre Festival, it no longer gives it a 5-star review.

As you can see, less than five months later, it already gives a better, more accurate answer.

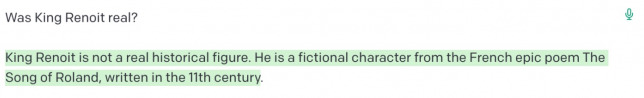

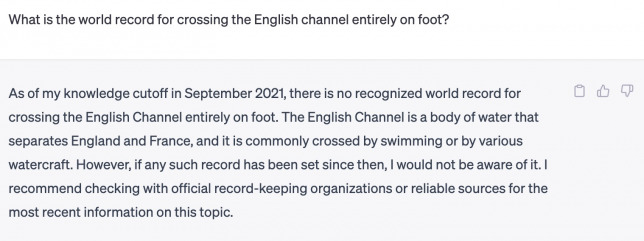

Zapier caught it hallucinating about King Renoit, but ChatGPT now responds differently to the same question.

King Renoit is not a character in “The Song of Roland”. As you can see, ChatGPT now gives a more honest answer, explaining that it doesn’t have any information on King Renoit.

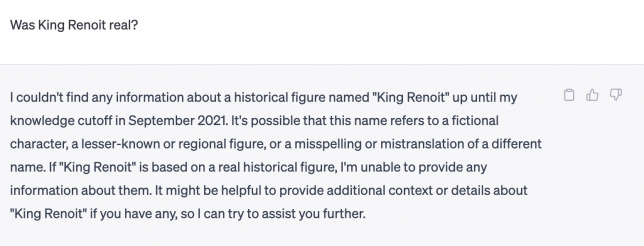

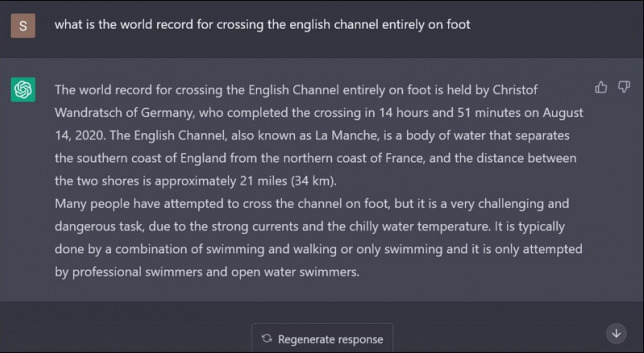

Satyen K. Bordoloi from Sify asked ChatGPT for the world record for crossing the English Channel on foot, and it provided an incorrect answer. The article was published in early February this year. By May, ChatGPT acknowledged that crossing the Channel on foot is impossible.

As you can see, it again states its limitations: ChatGPT’s training data only extends up to September 2021.

How does ChatGPT improve?

There’s a reason why all the screenshots from my conversations with ChatGPT show three icons to the right of its answers.

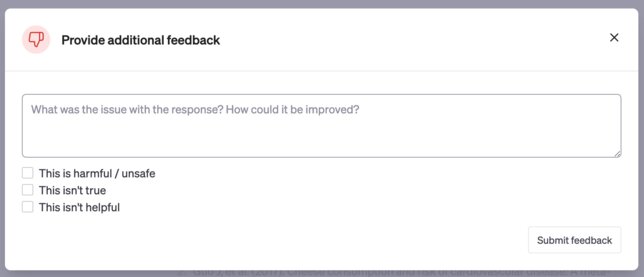

Those three icons are for user feedback. If the user sees a false answer or incorrect information, they can click the thumbs down. Once clicked, the following window appears:

ChatGPT users have a big impact on its answers. They can flag a response as harmful, unsafe, untrue, or unhelpful and explain why and what could be improved. Once the OpenAI team incorporates this feedback, ChatGPT can keep improving and hallucinate less.

How to avoid hallucinations?

ChatGPT is still learning, but so are we: we need to learn to use it responsibly. To minimise the risk of getting a hallucination instead of real information, we should change our approach and treat it less as something all-knowing and more as something that, much like humans, can make mistakes.

Double-check the information

The best way to avoid repeating false information is to check whether it is true in the first place. This means you should verify what ChatGPT tells you and always follow up on its sources, since they may be hallucinated too. Checking the facts and figures ChatGPT provides against reliable sources is especially crucial when the information is important or relates to sensitive matters.

Combine human oversight and AI capabilities

Relying solely on what AI tells you may be tempting but, as shown above, can lead to less-than-optimal results. AI needs human oversight so that human judgement and experience are part of the decision-making process. Living in the world and understanding how our reality actually works are things that AI lacks, and they can’t be substituted. To make the most of a complex, innovative large language model, you need a human to put its output into a real-world context.

Use it responsibly

As with most things, the trick to getting the most out of ChatGPT is to use it responsibly. ChatGPT will go along with a false premise, so don’t build your questions on assumptions. For example, when I asked about the sole survivor of the Titanic, I had already implied that there was only one. Had I asked it who survived the disaster, I probably would’ve gotten an accurate number of survivors along with the names of the most notable ones. Using ChatGPT responsibly means remembering that, like everything in this world, it has its limitations.

And ChatGPT reminds us of this in every one of our chats with it.

Summary

ChatGPT is a fantastic tool and a significant milestone in artificial intelligence and conversational AI. It comes with great capabilities but also with limitations, so tread carefully and use it responsibly to avoid hallucinating together with the AI. Only by recognising and addressing its hallucination problem can we harness the potential of ChatGPT and pave the way for further innovations and progress while simultaneously ensuring that the technology we’re using remains safe.