Piotr Golianek

Jun 21, 2024

Data is key for a better understanding of the market, your target audience, competitors, and business environment.

To better understand this topic and its importance in today’s world, we recommend you check out our article about elevating CX in e-government services.

Now, let’s get back to the main topic of this article. Below is a summary of the results of research conducted on seven government platforms, each of which was examined in both a qualitative study (expert audit) and a quantitative study (user research).

The summary includes government platforms from:

- Denmark

- Estonia

- France

- Finland

- Poland

- Sweden

- UK

The scope of this comparison was limited to the listed platforms, which were selected based on two criteria: their location in Europe and the availability of a user research sample.

We present this summary from the perspectives of both the expert audit and the user research, across the four areas examined:

- Findability: How easily and efficiently a user can perform an action.

- Usability: How usable the platforms are, as measured by the SUS (System Usability Scale) rating in the quantitative research.

- Assistance: How helpful the platform is to users who are having technical or process issues.

- Information: How understandable the information structure on the platform is.

In the expert audit, our experts evaluated these areas on a scale from 1 to 5, as you will see further in the article. In the user research, the respondents completed tasks and answered a series of questions to assess these areas.

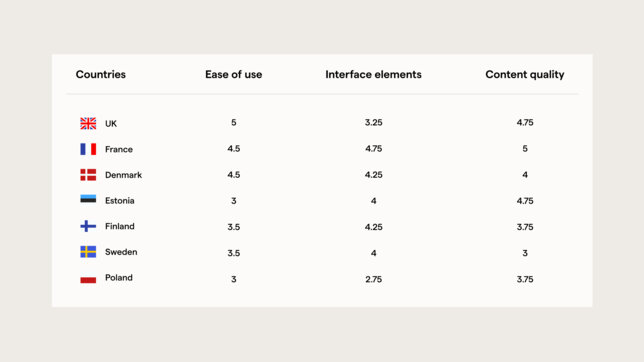

Findability

To make findability easier to understand, we selected three categories as part of the expert audit analysis and, within them, specific areas to compare. Take a look:

- Ease of use (Efficiency)

- Interface elements (Navigation)

- Content quality (User-centeredness)

In the user research, we asked respondents questions to gauge how easy it was to find what they needed for a set task on the government platform they use.

To effectively compare the results of the expert audit and quantitative research, we calculated the average scores from the expert audit for all the selected judging criteria for each country.

Here are the results for each category:

In the user research, the respondents' highest findability scores were recorded in Poland and the UK, which had an average of 4.7 points, suggesting an intuitive experience on their government platforms. Estonia also enjoyed a high score of 4.45 points, while France scored 4.15 points. Next was Sweden, with 4.05, and Denmark with 4.0 points. Conversely, Finland had the lowest score of 3.8 points, suggesting the presence of potential challenges.

When comparing the expert audit and the user research findings, there are certain consistencies between high scores, particularly in the case of the UK and Poland. However, differences, especially in the case of Finland, suggest that there are other factors that may influence the effectiveness of finding information that are not necessarily related to usability or interface quality.

Overall, we observe consistency in some areas, such as high scores in “Efficiency” and “User-centeredness” correlating with high success rates in finding information. However, disparities exist, notably in “Navigation” scores vs success rates, suggesting areas for improvement in interface design. Additionally, age demographics highlight potential usability challenges that could inform targeted improvements to enhance overall findability across different user groups.

Usability

To evaluate the usability of government platforms, the user research respondents answered 10 basic questions based on the System Usability Scale (SUS). A score below 68 indicates a low level of usability, while scores above 68 are considered good.
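The scoring step behind these numbers is not spelled out here, so the following is only a minimal sketch, assuming the standard SUS procedure: ten answers on a 1 to 5 scale, odd-numbered items contributing `answer - 1`, even-numbered items contributing `5 - answer`, and the sum multiplied by 2.5 to land on a 0 to 100 scale. The function names and the sample answers are hypothetical and are not taken from the study.

```python
from statistics import mean

SUS_BENCHMARK = 68  # threshold quoted in this article


def sus_score(responses):
    """Score one respondent's 10 SUS answers (each 1-5) on a 0-100 scale."""
    if len(responses) != 10 or any(r not in range(1, 6) for r in responses):
        raise ValueError("SUS needs exactly 10 answers, each from 1 to 5")
    total = 0
    for index, answer in enumerate(responses):
        if index % 2 == 0:
            # Items 1, 3, 5, 7, 9 are positively worded.
            total += answer - 1
        else:
            # Items 2, 4, 6, 8, 10 are negatively worded.
            total += 5 - answer
    return total * 2.5


def platform_sus(all_responses):
    """Average the per-respondent SUS scores for one platform."""
    return mean(sus_score(r) for r in all_responses)


# Hypothetical answers from two respondents, for illustration only.
sample = [
    [4, 2, 4, 2, 4, 2, 4, 2, 4, 2],
    [3, 3, 3, 3, 3, 3, 3, 3, 3, 3],
]
platform_average = platform_sus(sample)
verdict = "above" if platform_average > SUS_BENCHMARK else "not above"
print(f"Average SUS: {platform_average:.2f} ({verdict} the {SUS_BENCHMARK}-point threshold)")
```

Averaging per-respondent scores, rather than averaging raw answers first, keeps each respondent's score interpretable on its own before it is rolled up to the platform level.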

Based on the respondents' assessment, three countries exceeded the threshold of 68 points: Poland (75.29 points), the UK (74.87 points), and France (68.70 points). The scores for Denmark, Sweden, and Estonia fell below 68 points. Finland received the lowest score, at under 60 points.

— Agata Garstecka and Aleksandra Gońda, UX Designers

— Jakub Stróżyk, UX Researcher

Assistance

To understand experiences with assistance on the government platforms, we selected two criteria for assessment in the expert audit:

- Navigating through the process

- Guidance and support

In the user research, the questions related to these two areas were around:

- Technical assistance with respect to the use of the government website.

- Subject or process assistance after attempting to use the website to find the answer.

In "Navigating through the process," only Poland and the UK were assessed, as parts of the other platforms were inaccessible to researchers. In "Guidance and support," there were three assessment elements: two were assessed for every country, and one was completed only for Estonia, Poland, and the UK.

In the user research, respondents who had used chat, email, or telephone assistance were asked about their experiences in the two areas listed above: technical assistance and subject or process assistance.

Respondents rated their experiences with assistance on a 5-point scale of difficulty, from 1 (very difficult) to 5 (very easy).

For technical assistance, the UK received the highest score, 4.06, and was the only country to exceed 4 points. Denmark received the lowest score in this area, 3.54, which still falls between "moderately difficult" and "easy". The ratings for the other countries are closer to 4 points, which may suggest that accessing and using technical support was easy.

Surveyed citizens who had used support for subject or process assistance also usually gave a score of 4 points. In each of the surveyed countries, a rating of 4 points was given in over 40% of the responses. However, much more frequently than in the assessment of technical assistance, there were ratings at the 3-point level, which indicates that from the perspective of the respondents, this assistance was or could be moderately difficult.

When averaging the two different assistance scores, none of the countries exceeded 4 points. The UK was close, with a score of 3.90 points. Denmark received the lowest score in this case, with an average score of 3.34 points, which can be interpreted as moderately difficult.
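The averaging above can be worked backwards as a quick sanity check. This is only a sketch under two assumptions that are not confirmed here: that the 4.06 and 3.54 figures are the technical assistance scores, and that the combined figure is a simple unweighted mean of the technical and subject/process scores. The helper name is hypothetical, and the implied values are arithmetic consequences of those assumptions, not figures from the report.

```python
def implied_second_score(first_score, average):
    """Solve average = (first + second) / 2 for the second score."""
    return 2 * average - first_score


# (technical assistance score, combined average) as quoted in this article
reported = {"UK": (4.06, 3.90), "Denmark": (3.54, 3.34)}

implied = {
    country: round(implied_second_score(technical, combined), 2)
    for country, (technical, combined) in reported.items()
}
print(implied)
```

If the combined score was instead weighted by the number of respondents in each group, the implied values would differ, so this should be read as a rough cross-check only.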

The area of assistance provided the greatest discrepancies between the expert audit and user research results. These discrepancies result from the expert audits being based on the categories and subcategories indicated in the scoresheet, whereas the surveyed users based their assessment on their general experience.

Summarising the expert audit scores for "Navigating through the process" and "Guidance and support," the UK was rated the best. The UK was also rated the highest in the user research, which makes it the winner in this category.

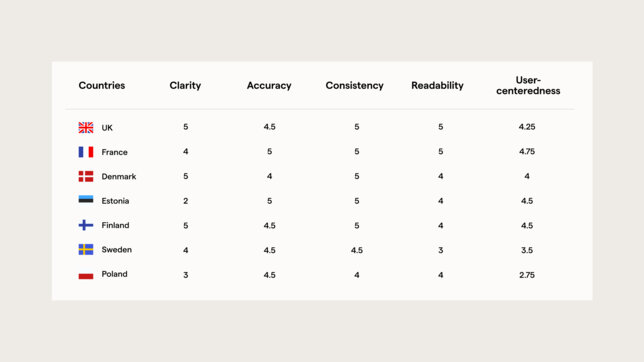

Information

To assess “Information,” we selected 5 categories as part of the expert audit:

- Clarity

- Accuracy

- Consistency

- Readability

- User-centeredness

To effectively compare the expert audit's results with those of the user research, we calculated the average scores from the expert audit for all the selected subcategories.

In the user research, respondents were asked to assess how much they agreed with the statement:

The content on the government website provides the necessary information on the topics/areas of civic issues I am searching for.

They were asked to rate it on a scale from 1 to 5.

Respondents from the UK (4.35 points), Poland (4.25 points), and Estonia (4.15 points) most often agreed with the statement. At the other end of the scale, Denmark residents gave their website the lowest score (3.35 points). France (3.95 points) scored noticeably higher than Denmark, but still below the UK, Poland, and Estonia.

Sweden (3.6 points) and Finland (3.7 points) sit between Denmark and France, indicating a moderate level of satisfaction.
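To put the seven agreement scores side by side, here is a minimal sketch that ranks them and compares each with their mean. The scores are the ones quoted in this section; the mean is an unweighted average across platforms (an assumption, since sample sizes per country are not given here), and the variable names are illustrative.

```python
from statistics import mean

# Agreement scores (1-5 scale) as quoted in this section.
information_scores = {
    "UK": 4.35,
    "Poland": 4.25,
    "Estonia": 4.15,
    "France": 3.95,
    "Finland": 3.7,
    "Sweden": 3.6,
    "Denmark": 3.35,
}

# Unweighted mean across the seven platforms (assumption: equal weight per country).
average = mean(information_scores.values())

# Countries ordered from highest to lowest agreement.
ranking = sorted(information_scores, key=information_scores.get, reverse=True)

for country in ranking:
    score = information_scores[country]
    position = "above" if score > average else "below"
    print(f"{country:8} {score:.2f}  ({position} the mean of {average:.2f})")
```

Under this assumption the mean comes out at roughly 3.91, so the UK, Poland, Estonia, and France sit above it, while Finland, Sweden, and Denmark sit below.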

Overall, while most countries demonstrate a considerable level of agreement with the statement, there are variations across different nations, suggesting varying levels of satisfaction with the accessibility and comprehensiveness of civic information on government websites.

Key takeaways & recommendations

Having walked through the details of our report and shown how the best public platforms perform in each area, it is time to distil the most important points and show how you can create a public platform that meets your users' expectations.

Let’s start with the key takeaways that will help you organise all of the information we gathered in this article.

- Consistency and disparities: While countries like the UK and Poland demonstrated alignment between expert audit scores and user research results, others such as Finland and Denmark exhibited significant discrepancies, highlighting the complexity of user satisfaction.

- Findability: The UK's and Poland's government platforms scored highly in findability across both expert audits and user research, whereas Finland showed notable challenges despite high expert scores in certain areas.

- Usability: High usability scores from users in Poland and the UK contrasted with lower scores in Denmark, Sweden, Estonia, and Finland, emphasising a need for better user-centric design and assistance.

- Assistance: The UK excelled in providing technical and subject/process assistance, while Denmark received lower scores, indicating a need for improved user support systems.

- Information: User satisfaction with the information provided on government websites varied. Estonia showed a strong alignment between expert assessments and user feedback, while Denmark and France revealed gaps despite high expert scores.

With the findings organised, here are our actionable recommendations for creating exceptional public platforms.

- Continuous user feedback integration: Implement mechanisms for regular user feedback collection to continually assess and adapt to user needs and preferences. User surveys, feedback forms, and usability testing should be standard practices.

- User-centric design improvements: Focus on enhancing navigation and interface elements to address findability issues. This includes simplifying menus, improving search functionality, and ensuring consistency in content presentation.

- Enhance assistance services: Improve technical and process-related assistance by integrating comprehensive help resources such as FAQs, chatbots, and live support. It is crucial to train support staff to handle diverse user queries efficiently.

- Tailored information delivery: Ensure that the information provided on government websites is not only clear and accurate but also tailored to the specific needs of different user demographics. Conduct targeted research to understand and address the informational needs of various user groups.

- Benchmarking and best practices: Regularly benchmark against high-performing countries like the UK and Estonia to identify and adopt best practices in digital service delivery. Learning from their successes can guide improvements in other countries.

- Cross-functional collaboration: Encourage collaboration between UX designers, content creators, and policymakers to create a cohesive strategy for digital service improvement. This interdisciplinary approach can help bridge gaps between expert assessments and user experiences.

- Focus on accessibility: Prioritise making government platforms accessible to all users, including those with disabilities. Implementing the WCAG (Web Content Accessibility Guidelines) standards can significantly enhance usability for a broader audience.

By implementing these recommendations, government platforms can better meet user expectations, enhance overall satisfaction, and ensure that digital services are effective and user-friendly across different national contexts.

If you need help with your platform and would like to take it to a whole new level, feel free to send us a message with a description of your project.

Summary

When comparing the results of the expert audit and the user research, there is a general alignment between the two methodologies, particularly evident in countries like the UK and Poland. However, variations in responses across different countries highlight the importance of considering cultural and contextual factors that may influence user perceptions and experiences. The findings underscore the need for ongoing analysis and potential adjustments to digital services to ensure they effectively meet user needs and expectations across diverse socio-cultural contexts.

Notably, countries such as Denmark and Sweden, despite scoring well in expert content quality audits, exhibited lower agreement rates from users regarding the sufficiency of information on their government websites. From that perspective, it’s possible that while the content may be deemed high-quality in terms of clarity, accuracy, and consistency, it might not fully address the specific informational needs or preferences of users in these countries.

Conversely, Estonia, which scored relatively high in both the expert audit and user research, indicates a stronger alignment between expert evaluations and user perceptions. This suggests that the content provided on government websites in Estonia not only meets high-quality standards but also effectively caters to the informational needs and expectations of its users, resulting in higher user satisfaction and agreement rates.

Finland performed moderately well in the expert audit but exhibited lower agreement rates in the user research. This could indicate potential gaps between perceived content quality and user satisfaction with the provided information. Further analysis would be required to identify underlying factors contributing to this discrepancy and implement targeted improvements to enhance the overall user experience.

Overall, these comparisons underscore the complexity of assessing and optimising digital content for government websites. While qualitative evaluations from expert audits provide valuable insights into content quality, user-centredness, and overall effectiveness, quantitative surveys offer a direct measure of user satisfaction and perceptions. By integrating findings from both methodologies and considering specific country contexts, policymakers and website developers can better tailor digital services to meet citizens' diverse needs. As a result, they can improve accessibility, usability, and satisfaction with government websites across different national contexts.

To learn more and explore more insights about public platforms, download our recent report: “State of CX in e-government platforms”.